Every enterprise functions on a binary substrate: the rigid, orderly world of structured data and the chaotic, expansive universe of unstructured data. On one side sit the precise rows and columns that balance ledgers and track inventory. On the other lies the flood of emails, contracts, and sensory inputs that capture the nuance of human communication.

For decades, systems were designed to optimize the orderly side while leaving the “messy” data to accumulate in dark silos. This segregation is now a strategic liability. With unstructured assets commonly estimated at 80% to 90% of all enterprise information, most of the potential business value is effectively hidden.

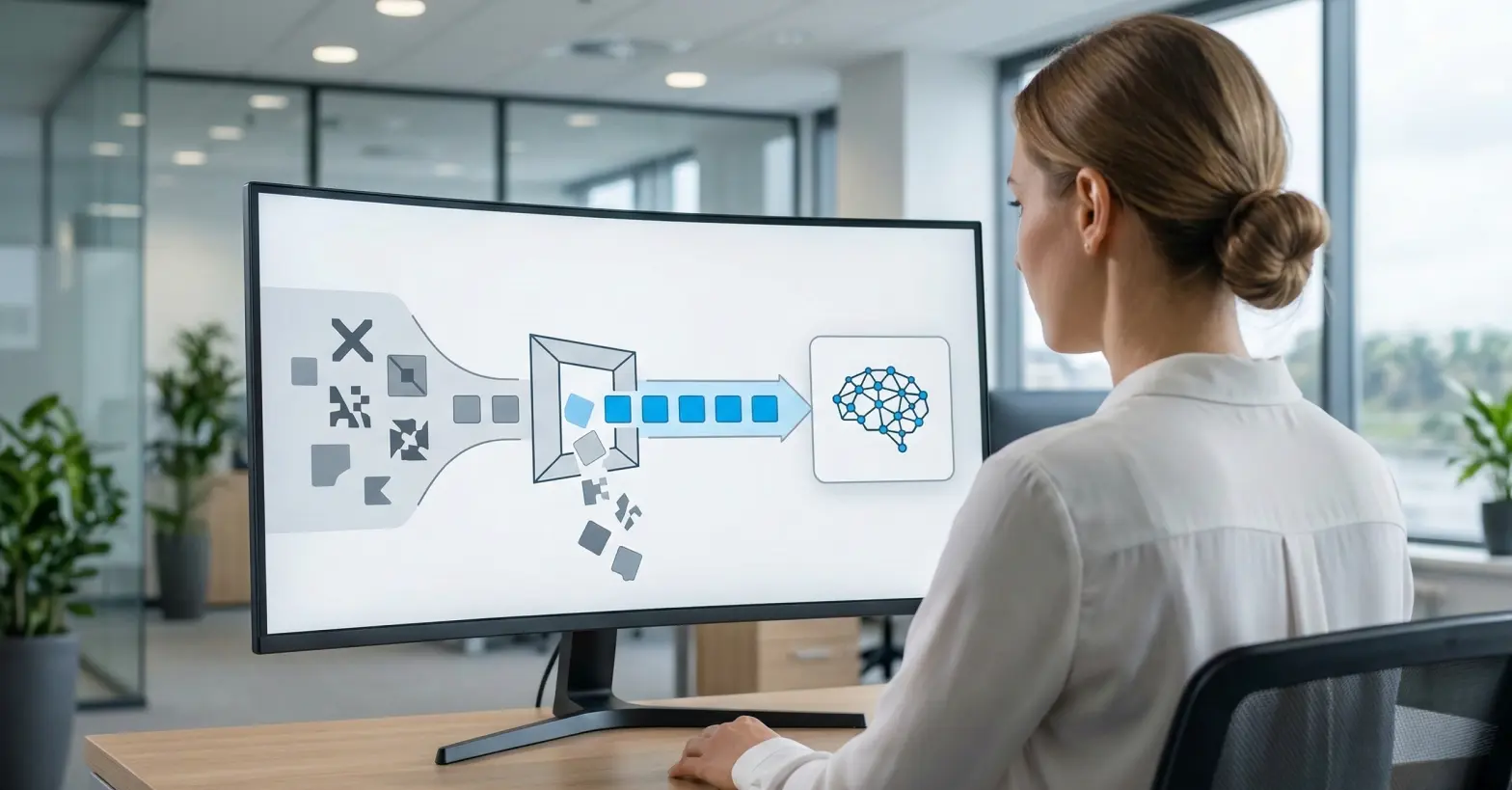

The distinction between these data types is no longer merely a technical detail regarding file extensions; it is the fundamental barrier to Artificial Intelligence. To succeed in the coming decade, leaders must bridge the gap between the semantic ambiguity of the unstructured world and the governance required by the enterprise.

Key takeaways

- Don’t ignore “dark data”: If you only analyze your structured databases, you are missing the insights hidden in your documents and communications.

- Adopt “schema-on-read”: Move faster by ingesting raw data now and defining the schema later when you analyze it, rather than blocking data at the door.

- Automate governance: Manual tagging is impossible at this scale. Use automated discovery tools (like Murdio’s Collibra + Ohalo stack) to map sensitive data across both worlds.

What exactly is structured data?

Structured data is the digital equivalent of a perfectly organized filing cabinet, where every folder is labeled and every document follows a strict template. It is quantitative, highly organized information that resides comfortably within the fixed fields (rows and columns) of a Relational Database Management System (RDBMS).

- The “Lego” metaphor: industry experts often liken structured data to a Lego brick. It is a standardized unit with precise dimensions that fits perfectly with other bricks because they share a universal interface. You cannot force a piece of clay into a Lego castle; the system is built to reject anything that does not fit the pre-defined shape.

- Schema-on-write: this rigidity is enforced through a mechanism called Schema-on-Write, where the system acts as a gatekeeper. Before data enters the database, it is validated against a strict rulebook – if an “Inventory” field expects a number but receives text, the entry is rejected to maintain the integrity of the data.

- Common use cases: because of its reliability, structured data is the standard for high-stakes Systems of Record, such as financial General Ledgers, inventory SKU (Stock Keeping Unit) trackers, and CRM customer profiles where accuracy is non-negotiable.
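The gatekeeper behaviour described above can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration, not a production schema: the `inventory` table, its columns, and the `try_insert` helper are hypothetical names chosen for the example, and the `CHECK` constraint is one of several ways to enforce a column type in SQLite.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# The CHECK constraint plays the gatekeeper: quantity must be stored as an integer.
conn.execute(
    """
    CREATE TABLE inventory (
        sku      TEXT PRIMARY KEY,
        quantity INTEGER NOT NULL CHECK (typeof(quantity) = 'integer')
    )
    """
)


def try_insert(sku, quantity):
    """Return True if the row was accepted, False if the schema rejected it."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO inventory (sku, quantity) VALUES (?, ?)",
                (sku, quantity),
            )
        return True
    except sqlite3.IntegrityError:
        return False


accepted = try_insert("SKU-001", 40)       # fits the schema
rejected = try_insert("SKU-002", "forty")  # text in a numeric field
row_count = conn.execute("SELECT COUNT(*) FROM inventory").fetchone()[0]
```

Only the first row reaches the table; the second is refused at write time, which is the property that makes such systems trustworthy as Systems of Record.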

What defines unstructured data?

Unstructured data is qualitative information that lacks a predefined data model or internal organizational structure. It represents the “native format” of human communication and sensory observation – raw text, audio, video, and images – stored exactly as it was created.

- The “clay” metaphor: if structured data is a Lego brick, unstructured data is water or clay. It is amorphous and fluid, taking the shape of whatever container holds it – whether that is a file server or a cloud bucket – without any inherent structure visible to a standard computer program.

- Schema-on-read: this fluidity is enabled by a principle called Schema-on-Read. Unlike a structured database that rejects data that doesn’t fit the rules, an unstructured repository accepts data as-is, without validation; structure is imposed only later, when the data is read for analysis.

- The “dark data” reality: because the data wasn’t organized upon entry, it frequently becomes “dark data” – information that an organization collects but fails to utilize for insight. These assets often sit in “data swamps,” invisible to the IT department until specific tools are used to locate them.
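As a rough sketch of schema-on-read, the snippet below accepts every record at write time and applies a schema only when the data is queried. The names `raw_store`, `ingest`, and `read_inventory` are invented for this illustration, and an in-memory list stands in for a file server or cloud bucket.

```python
import json

# Landing zone: raw records are stored exactly as they arrive, with no validation.
raw_store = []


def ingest(raw_line):
    raw_store.append(raw_line)  # nothing is rejected at write time


def read_inventory():
    """Apply the schema at read time; yield rows that fit and skip the rest."""
    for line in raw_store:
        try:
            record = json.loads(line)
            yield {"sku": str(record["sku"]), "quantity": int(record["quantity"])}
        except (ValueError, KeyError, TypeError):
            continue  # still stored, but invisible to this particular read


ingest('{"sku": "SKU-001", "quantity": 40}')
ingest('{"sku": "SKU-002", "quantity": "forty"}')
ingest("free-text note: pallet 7 looks damaged")

rows = list(read_inventory())
```

All three records are kept, but only one is usable by this reader. The other two remain in storage unseen, which is how “dark data” accumulates.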

Learn more about the challenges of unstructured data discovery here.

What are some real-world examples of unstructured data?

While structured data is often buried in back-office databases, unstructured data is the medium of daily business life, encompassing the documents we write, the messages we send, and the media we consume.

| Category | Examples | Description |
| --- | --- | --- |
| Human-generated text | Email threads, Slack/Teams logs, social media posts | Rich in semantics, sarcasm, and emotion, but filled with slang and lacking formal syntax. |
| Business documents | PDF contracts, invoices, Word documents, presentations | Often trapped in proprietary formats that require specific software to open and read. |
| Machine-generated media | Satellite imagery, surveillance video, thermal sensor logs | High-volume streams of pixels or binary data that are unintelligible without advanced processing. |
| Audio data | Call center recordings, voice notes, dictation | Contains critical sentiment and intent data but exists purely as sound waves until transcribed. |

It is important to note that these files are often wrapped in a layer of structured metadata.

For example, an email has a structured “From” address and “Date,” but the body text – containing the actual business context and intent – remains unstructured and opaque to traditional analysis.
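The email case can be made concrete with Python's standard `email` package. The message below is made up for the example; the point is only that the headers are addressable by name while the body comes back as a single opaque string.

```python
from email import message_from_string

raw_email = """\
From: alice@example.com
To: bob@example.com
Date: Mon, 03 Mar 2025 09:15:00 +0000
Subject: Contract renewal

Hi Bob, legal is fine with the renewal as long as the notice period drops to 30 days.
"""

msg = message_from_string(raw_email)

# Structured metadata: addressable by field name, like columns in a table.
sender = msg["From"]
sent_on = msg["Date"]

# Unstructured payload: one block of free text; the business intent
# (a condition on the notice period) is not exposed as a field anywhere.
body = msg.get_payload()
```

A SQL-style filter on `sender` or `sent_on` is trivial; answering “which emails change contract terms?” requires interpreting `body`.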

Where does semi-structured data fit in?

Sitting between the rigid world of databases and the chaotic world of raw text is semi-structured data. This format does not reside in a fixed table, but unlike unstructured data, it is not devoid of organization; instead, it uses semantic tags or markers to separate and label specific elements.

- The “self-describing” concept: while a structured CSV file requires a separate header row to tell you what “Column 3” is, semi-structured data carries its own instructions. For example, in a JSON file, the data explicitly labels itself: {"name": "John", "role": "Admin"}. This allows the computer to understand the context of the data without needing a pre-existing schema.

- The API economy: this flexibility makes semi-structured data the preferred language of the modern web and the API economy. When different software systems talk to each other (like a mobile app talking to a server), they typically exchange information in JSON or XML format because it is lightweight and adaptable.

- Hierarchical depth: unlike the flat, two-dimensional grid of a spreadsheet, semi-structured data can be nested hierarchically. An “Order” object can contain a list of “Items,” each of which can contain a list of “Parts,” to arbitrary depth. This makes it a natural fit for document-oriented NoSQL databases (like MongoDB) that power dynamic web applications.
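To illustrate the self-describing and hierarchical properties together, here is a small sketch using Python's `json` module. The order document and the `max_depth` helper are invented for the example.

```python
import json

order_json = """
{
  "order_id": "A-1001",
  "items": [
    {"name": "Bicycle", "parts": [{"name": "Frame"}, {"name": "Wheel"}]},
    {"name": "Helmet", "parts": []}
  ]
}
"""

# No external schema is needed: every value arrives with its own label.
order = json.loads(order_json)


def max_depth(node):
    """Count how many levels of nested objects and lists a parsed value contains."""
    if isinstance(node, dict):
        return 1 + max((max_depth(v) for v in node.values()), default=0)
    if isinstance(node, list):
        return 1 + max((max_depth(v) for v in node), default=0)
    return 0


# Walk Order -> Items -> Parts by following the labels.
part_names = [part["name"] for item in order["items"] for part in item["parts"]]
depth = max_depth(order)
```

Flattening the same order into a single spreadsheet grid would require either repeated rows or several linked tables.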

What is the main difference between structured and unstructured data?

The fundamental difference lies in the Data Model: structured data is “model-first,” requiring a rigid schema before it can be stored, while unstructured data is “content-first,” stored in its native format without any predefined structure.

This distinction dictates not just how the data is stored, but how quickly an organization can extract value from it.

This divergence has driven the evolution of enterprise architecture:

- The past (data warehouses): built specifically for structured data, these systems rely on expensive, high-performance storage and require complex ETL (Extract, Transform, Load) pipelines to clean and validate data before it ever enters the system.

- The present (data lakes): emerged to handle the massive volume of unstructured files, utilizing cheap object storage (like AWS S3) and an ELT (Extract, Load, Transform) approach where data is dumped immediately and processed only when needed.

- The future (data lakehouses): the modern architectural standard that attempts to merge these worlds, combining the strict governance of a warehouse with the massive scale and flexibility of a lake to support all data types on a single platform.

Table: The Data Spectrum Comparison

| Feature | Structured Data | Unstructured Data |
| --- | --- | --- |
| Metaphor | Lego bricks (rigid, modular) | Clay or water (fluid, amorphous) |
| Data Model | Relational (rows & columns) | None (raw stream/native format) |
| Searchability | High (easy to query via SQL) | Low (requires AI/vector search) |
| Processing | Schema-on-write (validate first) | Schema-on-read (validate later) |
| Storage Cost | High (premium compute) | Low (commodity storage) |
| Volume Share | ~10-20% of enterprise data | ~80-90% of enterprise data (Source) |
| Primary Risk | Security (theft of financial records) | Compliance (hidden PII/IP – Intellectual Property) |

How is AI changing the unstructured landscape?

The most significant shift in the data landscape is the rise of Generative AI. For fifty years, the primary goal of IT was to convert unstructured data into structured formats so computers could understand it.

Today, thanks to Large Language Models (LLMs), computers can natively “understand” the chaos of unstructured text and media.

The engine driving this capability is a technology called vector embeddings. Neural networks now have the ability to take a unit of unstructured data – whether it is a sentence from a contract, a pixel arrangement in an image, or a sound wave – and map it to a specific point in a high-dimensional vector space.

By calculating the mathematical “distance” between these points, systems can identify semantic relationships that traditional keyword searches miss. For instance, a search for “canine” will instantly retrieve documents containing “dog” because the system understands the concepts are related, even if the words differ.

To manage this new form of knowledge, organizations are deploying vector databases which index meanings rather than just text strings.
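The “distance” idea can be sketched in a few lines. The vectors below are hand-made, three-dimensional stand-ins chosen so that related words sit close together; a real embedding model would produce them automatically, with far more dimensions. Cosine similarity is used here as one common measure of closeness, and `nearest` is a hypothetical helper doing by brute force what a vector database does with an index.

```python
import math

# Toy "embeddings" for illustration only; these values are assumptions,
# not the output of any actual model.
embeddings = {
    "dog":     [0.90, 0.80, 0.10],
    "canine":  [0.85, 0.75, 0.15],
    "invoice": [0.10, 0.20, 0.95],
}


def cosine_similarity(a, b):
    """Return 1.0 for vectors pointing the same way, lower as they diverge."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query, k=1):
    """Rank every other term by similarity to the query term."""
    scores = [
        (term, cosine_similarity(embeddings[query], vec))
        for term, vec in embeddings.items()
        if term != query
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:k]


best_match = nearest("canine")[0][0]
```

Here “canine” lands next to “dog” even though the two strings share no characters a keyword search could match on.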

This semantic infrastructure has enabled the “killer app” for unstructured data in 2025: Retrieval-Augmented Generation (RAG). RAG architectures allow employees to effectively “chat” with their internal archives.

When a user asks a question, the system retrieves the most relevant paragraphs from thousands of unstructured PDF policies or emails and feeds them to an LLM to generate a summarized answer. This mitigates the infamous AI “hallucination” problem by grounding the model’s responses in the company’s actual data, although the answer can only be as reliable as the passages retrieved.

However, this capability introduces a critical new requirement: data quality. You cannot build a reliable AI on “junk” data. If you feed your vector database outdated contracts or incorrect procedure manuals, your AI will confidently provide wrong answers. Before this data is ingested, it must be rigorously labeled and filtered to ensure that the “clay” you are molding is actually usable.
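The retrieve-then-generate flow, including the quality gate, might look roughly like the sketch below. It is deliberately simplified: word overlap stands in for vector similarity, no LLM is actually called, and the document store, the `status` label, and the function names are all assumptions made for the example.

```python
import re

# Hypothetical document store; "status" stands in for whatever quality label is applied.
documents = [
    {"id": "policy-2021", "status": "superseded",
     "text": "Remote work requires director approval."},
    {"id": "policy-2024", "status": "current",
     "text": "Remote work is allowed up to three days per week."},
    {"id": "travel-2024", "status": "current",
     "text": "Economy class is required for flights under six hours."},
]


def tokenize(text):
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, k=1):
    """Return the k current documents sharing the most words with the question."""
    query_words = tokenize(question)
    candidates = [d for d in documents if d["status"] == "current"]  # quality gate
    ranked = sorted(
        candidates,
        key=lambda d: len(query_words & tokenize(d["text"])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question):
    """Assemble the grounded prompt that would be sent to an LLM."""
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in retrieve(question))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


prompt = build_prompt("How many days of remote work are allowed?")
```

Without the `status` filter, the superseded 2021 policy would compete for the same question and could be handed to the model as if it were current.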

Read how to approach unstructured data classification.

Why is governance the biggest challenge?

Governance is the single most difficult aspect of managing unstructured data because the very flexibility that makes it useful – its lack of structure – also makes it a perfect hiding place for risk.

Unlike a database where sensitive information is contained in a specific, known column (e.g., “SSN”), unstructured data scatters sensitive assets across millions of files without any map to locate them.

- The compliance minefield: Regulations like GDPR and CCPA (California Consumer Privacy Act) give individuals the right to have their personal data deleted, known under GDPR as the “Right to be Forgotten.” In a structured environment, complying with this request is a simple matter of running a DELETE query on a specific row. In an unstructured environment, it is a forensic nightmare. A customer’s name might exist in a scanned PDF invoice, an email thread, a voice recording, or a casual mention in a meeting transcript. If you cannot find every instance of that data, you cannot remain compliant, exposing the organization to massive fines.

- The ransomware target: Cybercriminals are acutely aware that the “crown jewels” of an organization – intellectual property, trade secrets, and strategic plans – live in unstructured documents rather than transactional databases. Because these file shares are often less guarded and harder to monitor than core databases, they have become a primary target for ransomware attacks that can paralyze a business.

- The cataloging gap: Most traditional data catalogs were designed for the structured world. They excel at scanning SQL schemas but go blind when facing a file server. They might register that a “Contract.pdf” exists, but they cannot tell you if that contract contains a credit card number or a highly confidential trade secret. This creates a dangerous blind spot where the majority of your data remains ungoverned and invisible to security teams.
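The contrast between the two worlds can be sketched as follows. The structured half uses `sqlite3`; the unstructured half scans a handful of made-up text extracts with regular expressions. The file names, customer names, and patterns are illustrative assumptions, the card number is a widely used test value, and pattern matching of this kind is only a crude stand-in for what dedicated discovery tools do.

```python
import re
import sqlite3

# Structured side: the customer lives in one known row of one known table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO customers (id, name) VALUES (1, 'Jane Doe'), (2, 'Sam Lee')")
deleted_rows = conn.execute(
    "DELETE FROM customers WHERE name = ?", ("Jane Doe",)
).rowcount

# Unstructured side: hypothetical text already extracted from files on a share.
files = {
    "invoice_0042.pdf.txt": "Invoice for Jane Doe, card 4111 1111 1111 1111",
    "meeting_notes.txt": "Sam mentioned that J. Doe asked about a refund.",
    "newsletter.txt": "Our spring catalogue is out now.",
}

name_pattern = re.compile(r"\bJane\s+Doe\b", re.IGNORECASE)
card_pattern = re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b")  # 16 digits in groups of 4

name_hits = sorted(f for f, text in files.items() if name_pattern.search(text))
card_hits = sorted(f for f, text in files.items() if card_pattern.search(text))
```

The DELETE removes exactly one row. The file scan finds the invoice but misses the “J. Doe” mention in the meeting notes, which is the kind of gap that makes unstructured erasure requests hard to close with simple rules.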

Explore best practices for unstructured data cataloging here.

How Murdio can help you govern the chaos

The future belongs to organizations that can master the “Hybrid” workflow – simultaneously managing the strict precision of structured databases alongside the vast, fluid ocean of unstructured AI assets.

To turn a “data swamp” into a functional “data lakehouse,” you need more than just storage; you need rigorous, automated cataloging that spans both worlds.

Murdio bridges this gap by implementing the unified Collibra + Ohalo (Data X-Ray) stack. While traditional governance tools often stop at the database level, our approach deploys Ohalo as the “eyes” of your infrastructure.

It scans deep into unstructured repositories – reading through Word documents, PDFs, and images – to automatically detect and classify sensitive data. We then integrate this intelligence directly into Collibra, creating a “Single Pane of Glass” where a PDF contract is governed with the same visibility and rigor as a SQL table.

This capability was critical for a major European Bank that recently partnered with Murdio. The bank was struggling with GDPR compliance, as critical PII was hidden across thousands of unmapped documents, effectively invisible to their risk teams.

By implementing the Collibra + Ohalo solution, Murdio automated the discovery of this hidden data, mapping PII from static files directly into the governance catalog.

This transformation allowed the bank to achieve full regulatory visibility, effectively turning a massive compliance liability into a governed, searchable asset.

Ready to bring structure to your unstructured world? Contact the Murdio implementation team today to audit your data governance maturity and start building a foundation for secure AI.

Frequently Asked Questions