Most articles on “data quality strategy” present a tactical checklist: a series of steps to profile, cleanse, and monitor data. While useful, that isn’t a strategy – it’s a plan.

A true strategy doesn’t begin with how; it defines the fundamental why and what. It’s the high-level vision that dictates which plans are worth making in the first place.

This guide is for leaders who understand that distinction. We will move beyond the tactical to-do list to focus exclusively on the four foundational choices you must make to set a lasting, resilient direction for data quality – the compass that will guide every plan, tool, and team in your organization.

The benefits of a data quality strategy: Moving from metric to driver

The first and most critical strategic choice is to define how the organization perceives data quality. Is it a technical problem managed by IT – a cost center focused on cleanup? Or is it a strategic asset that directly drives business value?

A lasting strategy is built on the latter. It reframes the conversation from technical metrics to tangible business outcomes, elevating data quality from a reactive chore to a proactive, value-creating discipline.

This requires a conscious decision to tie all data quality efforts to top-level business goals. Instead of focusing on error rates in a database, a strategic approach centers on achieving specific, high-value outcomes:

Improving decision-making by ensuring data trust

The most fundamental goal is to foster an environment where leadership can trust the information used for critical decisions. A strategy rooted in this outcome commits the organization to delivering reliable, accurate data that builds confidence and reduces risk in every business unit.

Increasing operational efficiency

Poor data quality creates a significant but often hidden drag on productivity. It forces teams to spend countless hours manually fixing common data quality issues, debugging broken processes, and reworking flawed analyses. A strategic focus on efficiency aims to eliminate this waste, freeing up resources for innovation and growth.

Gaining a competitive advantage

Superior data is a powerful differentiator in a competitive market. High-quality information enables better customer experiences, more effective personalization, and streamlined supply chains – advantages that are especially critical in sectors like retail and manufacturing.

Ultimately, this choice comes down to a single question for leadership: “Will we treat data quality as a cost center for fixing problems, or as a value-driver for achieving our primary business objectives?” Your answer will define the ambition and impact of your entire program.

A new model for data quality management: From control to shared responsibility

Your second strategic choice defines who is ultimately accountable for the quality of your data. The traditional model treats data quality as a centralized function, where a dedicated IT or governance team is responsible for policing and fixing issues.

This approach is no longer scalable. It creates bottlenecks and absolves the people closest to the data – those who create and use it every day – of any real accountability.

A modern strategy requires a fundamental cultural shift: from centralized control to shared responsibility. This is a deliberate choice to embed data quality as a core value across the entire organization, making it an integral part of everyone’s role.

This philosophy is built on two key concepts:

Treating data as a product

This is the most powerful mindset shift you can make. When data is viewed as a core business product, it fundamentally changes the dynamic of ownership.

Data producers (like application development teams) become accountable for the quality of the “product” they create, while data consumers (like analytics teams) have clear expectations for its reliability and fitness for use.

This moves accountability to the edges of the organization, where it is most effective.

Empowering data stewards to champion best practices

In this model, data stewards are not enforcers but champions. Strategically positioned within business units, their role is to enable, educate, and promote best practices. They foster a sense of local ownership and become the go-to experts for their domain, which makes them central to putting data quality best practices into everyday use.

This leads to the second critical question for leadership: “Is data quality the responsibility of a single IT or governance team, or is it a shared value embedded in every team that produces or consumes data?” Choosing the latter sets the foundation for a scalable and self-sustaining data quality culture.

How to improve data quality: Evolving from reactive to proactive

The third strategic choice defines your organization’s long-term technological philosophy. For decades, the default approach has been reactive: finding and fixing bad data after it has already entered your systems and potentially caused damage.

This model is a constant drain on resources and erodes trust. A modern strategy requires a decisive shift from this posture of reactive cleanup to one of proactive prevention, using technology to anticipate and stop issues before they impact the business.

This strategic pivot is built on two core technological commitments:

Committing to data observability

This is the decision to invest in a real-time, holistic understanding of your data ecosystem’s health, rather than relying on a periodic data quality assessment.

Data observability provides continuous insight into the state of your data as it moves through your pipelines, monitoring for anomalies in freshness, volume, and structure.
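To make the three signals concrete, here is a minimal sketch of what a freshness, volume, and structure check on a single pipeline load might look like. It is an illustration only: `BatchStats`, `check_batch`, the thresholds, and the column names are all hypothetical, and a real observability platform would gather these statistics continuously rather than from hand-built values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class BatchStats:
    """Hypothetical summary of one pipeline load; field names are illustrative."""
    loaded_at: datetime
    row_count: int
    columns: frozenset


def check_batch(batch, now, max_age, expected_rows, tolerance, expected_columns):
    """Return a list of alerts covering freshness, volume, and structure."""
    alerts = []
    # Freshness: did the data arrive recently enough?
    if now - batch.loaded_at > max_age:
        alerts.append("freshness: last load is older than allowed")
    # Volume: is the row count within a tolerance band around the expectation?
    if abs(batch.row_count - expected_rows) > tolerance * expected_rows:
        alerts.append("volume: row count outside expected range")
    # Structure: did any columns appear or disappear?
    if batch.columns != expected_columns:
        changed = sorted(batch.columns ^ expected_columns)
        alerts.append("structure: unexpected column change " + ", ".join(changed))
    return alerts


# Assumed example values: a load that is late, too small, and missing a column.
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
batch = BatchStats(
    loaded_at=now - timedelta(hours=30),
    row_count=4_000,
    columns=frozenset({"customer_id", "order_total"}),
)
alerts = check_batch(
    batch,
    now,
    max_age=timedelta(hours=24),
    expected_rows=10_000,
    tolerance=0.2,
    expected_columns=frozenset({"customer_id", "order_total", "order_date"}),
)
for alert in alerts:
    print(alert)
```

The point of the sketch is the posture, not the code: each check runs as data moves through the pipeline, so a problem surfaces before a business user finds it in a report.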

This strategic choice directly informs how to choose a data quality platform, as it prioritizes tools that offer end-to-end visibility over those designed for static, after-the-fact analysis.

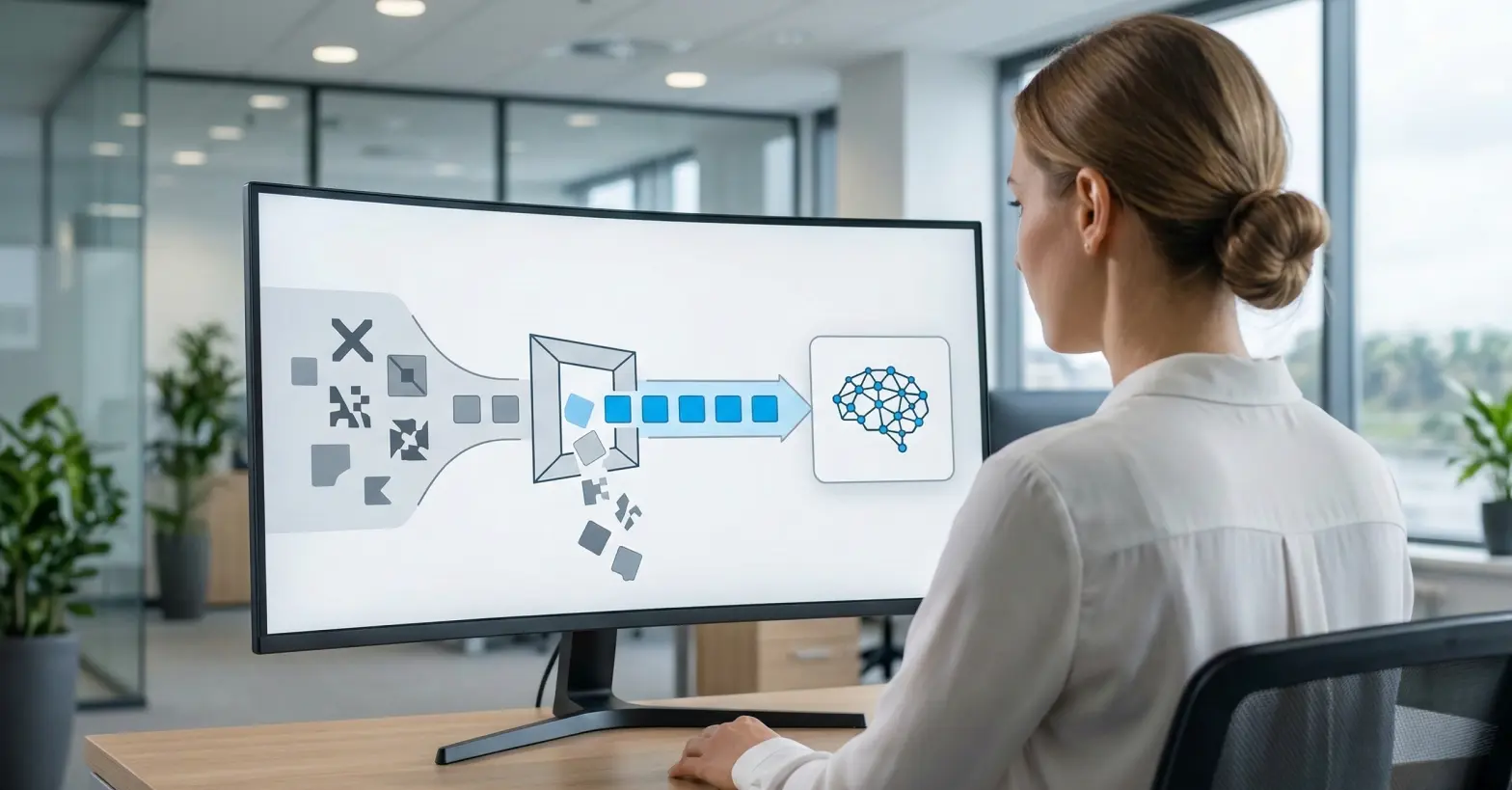

Leveraging AI for prediction, not just correction

This is the strategic choice to use artificial intelligence and machine learning as a predictive engine. Instead of just using AI to automate the cleansing of known errors, a forward-looking strategy employs it to analyze historical patterns, forecast potential data issues, and detect “unknown unknown” anomalies that manual rules would miss.

This evolves traditional data quality assurance from a process of validation into a practice of prediction.
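As a rough illustration of learning from historical patterns rather than hand-written rules, the sketch below flags a daily row count that deviates sharply from recent history. It uses a plain z-score as a simple statistical stand-in, not a machine learning model, and the function name `is_anomalous`, the threshold, and the sample numbers are assumptions made for the example.

```python
from statistics import mean, stdev


def is_anomalous(history, value, threshold=3.0):
    """Flag a value that lies more than `threshold` standard deviations
    from the mean of the historical observations."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # No variation in history: any different value is unexpected.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical daily row counts for one table over the past week.
daily_rows = [1000, 1020, 990, 1010, 1005, 995, 1015]

typical_day = is_anomalous(daily_rows, 1012)
sudden_drop = is_anomalous(daily_rows, 400)
print(typical_day, sudden_drop)
```

No one had to write a rule saying "fewer than 900 rows is a problem"; the expectation comes from the data's own history. Predictive tooling extends the same idea with richer models and more signals.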

This leads to the third fundamental question for leadership: “Will our technology strategy focus on cleaning up yesterday’s data problems, or on predicting and preventing tomorrow’s?”

Implementing a data quality program: Continuous improvement vs. a one-time project

The final strategic choice determines how your data quality program will live, breathe, and adapt over time. The traditional approach treats data quality as a large, monolithic project with a defined start and end date.

This “big bang” model is rigid, slow to deliver value, and often fails to adapt to the evolving needs of the business. A modern strategy, however, makes the deliberate choice to embrace an agile and iterative philosophy.

This is a commitment to treat data quality not as a project to be completed, but as a perpetual practice of continuous improvement. This strategic mindset is built on two core principles:

Adopting an agile mindset for your data quality strategies

This is the strategic decision to pursue a cycle of continuous data quality improvement through small, incremental steps.

Instead of attempting to “boil the ocean” with an enterprise-wide initiative, an agile strategy focuses on delivering value quickly by targeting the most critical, high-impact data areas first.

Success in these initial phases builds momentum and provides valuable learnings that inform the ongoing evolution of the program.

Managing data debt and tracking data quality progress

Every organization has accumulated “data debt” – the implied cost of all the historical data quality issues that have been ignored over time.

A massive, one-time cleansing project is often too disruptive and costly to be feasible. The strategic alternative is to manage this debt gradually.

This involves committing a small portion of resources in each operational cycle to address legacy issues, tracking progress with tools like a data quality scorecard and demonstrating steady improvement over time.
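A data quality scorecard can be as simple as a pass rate per quality dimension, compared from one cycle to the next. The sketch below assumes hypothetical dimensions and record counts purely to show the arithmetic; the `scorecard` function and its input shape are illustrative, not a feature of any specific tool.

```python
def scorecard(checks):
    """Turn (dimension, passed_records, total_records) tuples into
    a percentage pass rate per dimension."""
    return {
        dimension: round(100 * passed / total, 1)
        for dimension, passed, total in checks
    }


# Assumed results from two consecutive operational cycles.
q1 = scorecard([("completeness", 900, 1000), ("validity", 850, 1000)])
q2 = scorecard([("completeness", 950, 1000), ("validity", 880, 1000)])

# Change in percentage points per dimension between the two cycles.
trend = {dimension: round(q2[dimension] - q1[dimension], 1) for dimension in q1}
print(q1, q2, trend)
```

Tracking the change between cycles, rather than a single absolute score, is what shows leadership that the debt is being paid down steadily.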

This leads to the final strategic question for leadership: “Is our data quality initiative a finite project we will complete, or is it a perpetual practice of continuous improvement that will evolve with the business?”

Operationalizing your strategy: The role of governance in ensuring data quality

A well-defined strategy provides the compass for your organization, but it doesn’t drive the vehicle. To turn your strategic vision into a daily operational reality, you need a robust data governance program.

Governance is the engine that translates high-level intent – like establishing shared ownership or treating data as a product – into tangible, enforceable practices. It provides the structure for defining roles, setting policies, and managing data assets consistently across the enterprise.

Modern data governance platforms like Collibra are designed to be the central hub for this operationalization. They provide the framework to formally assign data stewards, document business rules, and automate the data quality checks that ensure your standards are met.

By creating a single source of truth for policies and definitions, a governance platform makes your strategic commitment to data quality visible, actionable, and measurable for everyone in the organization.

Bridging the gap with expert implementation

Defining your strategy is a critical first step, but successfully implementing a powerful platform like Collibra to execute that unique vision is a significant challenge. The technology is only as effective as its alignment with your specific strategic goals.

This requires deep technical expertise combined with a clear understanding of your business objectives to ensure the platform doesn’t just get installed, but is woven into the fabric of your operations.

At Murdio, we specialize in precisely this challenge. Our dedicated teams of Collibra experts don’t just install software; we partner with you to translate your strategic vision into a living, breathing data governance practice.

Whether it’s through expert implementation or custom development, we ensure your Collibra instance is purpose-built to execute the strategic choices you’ve made. If you’re ready to move from strategic planning to successful execution, contact Murdio to learn how our Collibra services can bring your data quality vision to life.

Your strategic data quality checklist: 4 questions to answer

Before you write a single plan or evaluate a tool, your leadership team must align on the answers to these four strategic questions. Use this checklist to build your foundation.

1. Have we defined the business value?

- Identify 3-5 critical business initiatives (e.g., “launching our new AI model,” “improving customer retention,” “optimizing supply chain costs”).

- Meet with the owners of those initiatives and ask: “What critical decisions do you need to make, and what data do they depend on?”

- Write a value statement that connects data quality directly to one of those initiatives (e.g., “We will ensure the quality of customer purchase history data to enable the 5% reduction in churn targeted by the retention team”).

2. Have we defined the ownership model?

- Get executive buy-in for a fundamental cultural shift from centralized IT cleanup to shared, federated ownership.

- Formally adopt the “Data as a Product” philosophy, where teams that create data are accountable for its quality as a product they deliver to the organization.

- Identify potential data stewards within business units, not IT. Frame their role as “enablers and champions,” not “enforcers.”

3. Have we defined the technology posture?

- Ask your data teams: “Are our current tools and processes built to find problems after they happen, or prevent them from happening at all?”

- Assess your “unknown-unknowns”: How many data issues are first discovered by business users in a failed report, after the data has already caused damage?

- Commit to a “proactive prevention” principle that will guide all future data quality tool selection and implementation.

4. Have we defined the implementation philosophy?

- Resist the “boil the ocean” plan. Instead, identify one single, high-visibility data domain or business problem to serve as your pilot.

- Define a clear, non-technical success metric for this pilot (e.g., “Reduce the sales team’s manual correction time by 50% within Q1”).

- Commit to an agile, iterative rollout. Your goal is not a single “go-live” date, but a perpetual practice of continuous improvement.

Your strategy is the compass, not the map

A tactical plan is a map for a known road; your strategy is the compass that provides a constant sense of direction for any terrain.

By answering the core strategic questions in the checklist above, you define the “why” and “what” of your data efforts. These high-level decisions give purpose to every future plan, tool, and team, ensuring every investment is a deliberate step toward building a lasting, resilient, and value-driven data practice that the business can trust.