Yes, but only for small, static datasets. In enterprise environments (like banking or retail), this requires a centralized platform like Collibra that continuously profiles sources and alerts Data Stewards.

A Data Quality Report (DQR) is a structured document that evaluates data suitability for business objectives based on DAMA-DMBOK or ISO 8000 standards. In 2026, a comprehensive report must contain six quality dimensions, a Business Impact analysis, and an automated remediation plan.

For enterprise organizations, the most effective environment to automate DQRs and manage Critical Data Elements (CDEs) is the Collibra platform, which centralizes metadata and triggers remediation processes.

Key takeaways: building an enterprise-ready DQR

- Every report must measure completeness, accuracy, consistency, timeliness, uniqueness, and validity to align with DAMA-DMBOK guidance.

- Technical errors must be translated into financial or regulatory risks (e.g., blocked invoices or GDPR non-compliance) to gain executive buy-in.

- Manual DQRs are obsolete; platforms like Collibra automate profiling and remediation by connecting to cloud warehouses like Snowflake and ERPs like SAP.

- Prioritize Critical Data Elements to avoid “report fatigue” and focus remediation efforts where they matter most for the business.

- In 2026, static reports are being replaced by proactive Data Observability that detects anomalies using AI before they break downstream systems.

What is a data quality report and why does it require data governance?

A Data Quality Report (DQR) is a strategic analytical document that measures the accuracy, completeness, and reliability of data across enterprise systems like CRMs, ERPs, and data warehouses.

This report does more than just list empty data fields or duplicate records. It shows business leaders exactly how poor data quality causes financial and operational losses. A well-designed DQR acts as a foundation for implementing data remediation plans.

However, a standalone report is not enough to fix the underlying data issues. Based on Murdio’s implementation experience, organizations must combine the DQR with a strong Data Governance framework. Platforms like Collibra operationalize these reports by automatically assigning data anomalies to specific Data Stewards for immediate resolution.

What must a proper data quality report contain? (DAMA standards)

To comply with recognized methodologies like DAMA-DMBOK, a report cannot simply be a raw SQL database dump. It must consist of five standardized elements:

- Executive Summary. Provides a single data health metric (DQ Score) and a month-over-month trend line for key business domains.

- Data Quality Dimensions. Measures the data against six core parameters: completeness, accuracy, consistency, timeliness, uniqueness, and validity.

- Critical Data Elements (CDE) Definition. Identifies which data fields are essential for business operations. Categorizing CDEs is mandatory in heavily regulated industries. For example, our Case Study on cataloging sensitive CDEs in a Swiss Bank demonstrates how proper CDE management mitigates severe regulatory and financial risks.

- Business Impact Analysis. Translates technical data anomalies into specific financial risks (e.g., “Invalid Tax ID formats block 200 invoices worth $50,000”).

- Remediation Plan. Delivers actionable Root Cause Analysis (RCA) recommendations supported by Data Lineage mapping.
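As a rough illustration of how the dimension and business-impact elements fit together, the sketch below computes three of the six dimensions (completeness, uniqueness, validity) on a handful of invoice records and then sums the value of invoices with an invalid Tax ID. The records, field names, and the Tax ID pattern are hypothetical assumptions made for this example; real rules would come from your own business definitions.

```python
import re

# Hypothetical invoice records; field names and values are illustrative only.
INVOICES = [
    {"invoice_id": "INV-1", "tax_id": "DE123456789", "amount": 250.0},
    {"invoice_id": "INV-2", "tax_id": "", "amount": 100.0},
    {"invoice_id": "INV-3", "tax_id": "123", "amount": 400.0},
    {"invoice_id": "INV-3", "tax_id": "DE987654321", "amount": 400.0},
]

# Assumed validity rule: two uppercase letters followed by nine digits.
TAX_ID_PATTERN = re.compile(r"[A-Z]{2}\d{9}")


def completeness(records, field):
    """Share of records where the field is populated."""
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)


def uniqueness(records, field):
    """Share of distinct values of the field among all records."""
    return len({r[field] for r in records}) / len(records)


def validity(records, field, pattern):
    """Share of records whose value fully matches the pattern."""
    valid = sum(1 for r in records if pattern.fullmatch(r.get(field) or ""))
    return valid / len(records)


def blocked_amount(records, field, pattern):
    """Total amount of invoices whose field fails the validity rule."""
    return sum(
        r["amount"] for r in records if not pattern.fullmatch(r.get(field) or "")
    )


report = {
    "completeness": completeness(INVOICES, "tax_id"),
    "uniqueness": uniqueness(INVOICES, "invoice_id"),
    "validity": validity(INVOICES, "tax_id", TAX_ID_PATTERN),
    "blocked_amount": blocked_amount(INVOICES, "tax_id", TAX_ID_PATTERN),
}
print(report)
```

The last entry is the one that tends to matter to executives: it restates a validity failure as money at risk rather than as a percentage.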

Data quality automation and tool selection (“deal breakers”)

For large enterprises, manually building Data Quality Reports is impractical at scale. Platforms like Collibra automate this process, provided they are properly integrated with your data sources. A major “deal breaker” for companies (especially in finance and retail) is the inability to create custom data lineage from closed or complex cloud-based systems.

If a governance tool cannot connect to your actual data architecture, your DQR will be incomplete. Below is a breakdown of common technical deal breakers and how to solve them.

| Tool / Functionality | Key Role in DQR | Common “Deal Breaker” | Murdio’s Solution (Proven Examples) |

| Cloud Data Warehouse Integration | Automated data profiling and anomaly detection. | Lack of technical visibility into data flows from modern warehouses. | Building custom technical lineage from Snowflake to Collibra. |

| ERP System Connection | Controlling consistency of financial and logistical data. | Closed ERP architectures preventing attribute mapping to the report. | Implementing Custom Collibra SAP Lineage. |

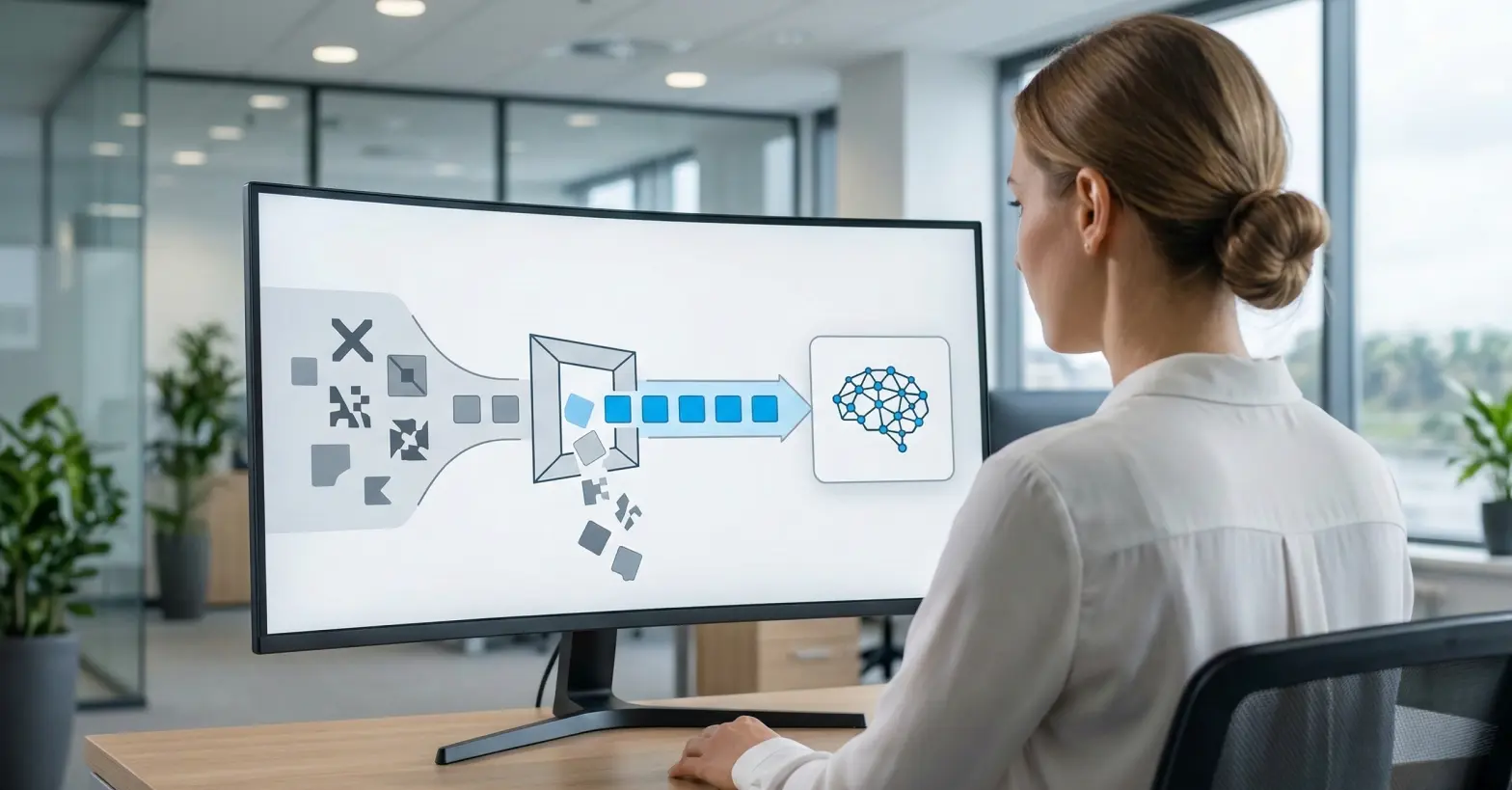

| AI Models Oversight | Verifying the quality of data feeding machine learning models. | No transparency regarding the input data used for AI algorithms. | Extending the operating model to include AI Governance in Collibra. |

Connecting legacy or closed ERP systems is often the hardest hurdle. Our custom SAP lineage implementation proves that mapping complex financial attributes to Collibra is both possible and necessary for a reliable Data Quality Report.

“Large enterprises often struggle with ‘invisible’ data quality issues hidden deep within SAP or legacy ERPs. Our custom lineage work has proven that unless you can visualize how a financial attribute flows from the ERP into your reporting layer, you can’t truly trust your DQ Score. Transparency is the only cure for data distrust.” ~ XXX, Job Title at Murdio

Integrating cloud data warehouses is critical for real-time reporting. As seen in our Snowflake technical lineage implementation, extracting metadata directly into Collibra ensures your DQR reflects actual cloud operations.

Finally, poor data quality breaks AI. Our work strengthening AI Governance for a global bank highlights that you must validate input data before it reaches the machine learning model.

The evolution of reporting: AI-driven data quality and observability

In 2026, the traditional, reactive Data Quality Report is being replaced by proactive Data Observability. Tools like Collibra Data Quality & Observability use machine learning to change the reporting landscape from static snapshots to living health monitors.

AI-driven reporting shifts the focus in three ways:

- Auto-discovery of rules: Instead of manually writing SQL rules for every column, AI analyzes historical patterns to identify what “normal” data looks like and alerts you to outliers.

- Predictive maintenance: Reporting moves from “what went wrong yesterday” to “what is likely to break today” based on data volume anomalies or schema changes.

- Reduced noise: Machine learning groups related anomalies together, preventing “alert fatigue” and ensuring the DQR only highlights issues with actual business impact.
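To make the idea of learning what "normal" looks like more concrete, here is a minimal statistical baseline, assuming a simple z-score on daily row counts. It is not how Collibra Data Quality & Observability works internally; the function name, the threshold of 3.0, and the sample counts are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def is_volume_anomaly(history, today, threshold=3.0):
    """Flag today's row count if it lies more than `threshold`
    sample standard deviations away from the historical mean."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        return today != mu
    return abs(today - mu) / sigma > threshold


# Hypothetical row counts for the last seven daily loads.
daily_row_counts = [10_000, 10_200, 9_900, 10_100, 9_800, 10_050, 9_950]

print(is_volume_anomaly(daily_row_counts, 10_100))  # within the usual range
print(is_volume_anomaly(daily_row_counts, 4_000))   # sharp drop in volume
```

A rule like this needs no hand-written SQL per table, which is the point of auto-discovery; production tools typically add seasonality handling and grouping of related alerts on top.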

What are the common mistakes when designing a data quality reporting process?

Designing a DQR process without a strategic perspective often leads to “report fatigue” where stakeholders ignore the findings. Based on our technical audits at Murdio, these are the most frequent errors:

Focusing on technical metrics without business context

Reporting that “5% of fields are null” is meaningless if those fields are non-essential metadata. A DQR must prioritize Critical Data Elements (CDEs) like IBANs, Tax IDs, or customer identifiers that directly impact regulatory compliance or revenue. Without this context, stakeholders become overwhelmed by irrelevant noise while missing critical errors that lead to failed audits or lost customers.
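One way to picture this prioritization is a filter that keeps only findings on Critical Data Elements. The sketch below assumes a hypothetical CDE list and made-up null rates per field; the names and the tolerance parameter are illustrative, not a prescribed method.

```python
# Hypothetical set of Critical Data Elements agreed with the business.
CRITICAL_DATA_ELEMENTS = {"iban", "tax_id", "customer_id"}

# Made-up null rates per field from a profiling run.
null_rates = {
    "iban": 0.02,
    "tax_id": 0.01,
    "marketing_note": 0.40,
    "customer_id": 0.0,
}


def cde_findings(rates, cdes=CRITICAL_DATA_ELEMENTS, tolerance=0.0):
    """Keep only null-rate findings on CDEs that exceed the tolerance."""
    return {
        field: rate
        for field, rate in rates.items()
        if field in cdes and rate > tolerance
    }


print(cde_findings(null_rates))
```

Here the 40% null rate on a non-essential note field never reaches the report, while the small gaps in IBAN and Tax ID do.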

Lack of actionable ownership

Many reports accurately identify errors but fail to specify who is empowered to fix them. This creates a “diffusion of responsibility” where everyone sees the issue, but no one acts. Without assigned Data Stewards and automated workflows in a platform like Collibra, the report becomes a static “wall of shame” that highlights problems without offering a path to resolution, eventually leading to organizational apathy.
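The ownership principle can be sketched as a lookup that routes each anomaly to a named steward, with a fallback so that nothing is left unassigned. The registry, e-mail addresses, and class below are hypothetical and do not represent Collibra's workflow API; in practice the platform's own workflows would handle the assignment.

```python
from dataclasses import dataclass


@dataclass
class Anomaly:
    domain: str
    field: str
    description: str


# Hypothetical ownership registry: business domain -> Data Steward.
STEWARDS = {
    "finance": "finance.steward@example.com",
    "customer": "customer.steward@example.com",
}
# Assumed fallback so no anomaly is ever left without an owner.
FALLBACK_OWNER = "data.governance@example.com"


def assign_owner(anomaly, stewards=STEWARDS, fallback=FALLBACK_OWNER):
    """Return the steward responsible for the anomaly's domain."""
    return stewards.get(anomaly.domain, fallback)


def build_work_queue(anomalies):
    """Group anomalies by the owner who has to resolve them."""
    queue = {}
    for anomaly in anomalies:
        queue.setdefault(assign_owner(anomaly), []).append(anomaly)
    return queue


anomalies = [
    Anomaly("finance", "tax_id", "Invalid format"),
    Anomaly("customer", "email", "Duplicate values"),
    Anomaly("logistics", "warehouse_code", "Unknown code"),
]
work_queue = build_work_queue(anomalies)
for owner, items in work_queue.items():
    print(owner, [a.field for a in items])
```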

Ignoring data lineage

Measuring quality solely at the consumption layer (e.g., in a PowerBI dashboard) makes remediation a guessing game. If an error is spotted in a final report, engineers without technical lineage can lose many hours manually hunting through code. Without a clear map of the data journey, you cannot tell whether an anomaly was introduced at the source, during a complex ETL transformation, or by a bug in the cloud warehouse.
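Conceptually, lineage is a graph, and root-cause tracing is a walk upstream through it. The following sketch assumes a tiny hand-written lineage map with hypothetical asset names; a real catalog would supply this graph from harvested metadata.

```python
# Hypothetical lineage map: each asset lists the assets it reads from.
UPSTREAM = {
    "powerbi.revenue_dashboard": ["warehouse.fct_revenue"],
    "warehouse.fct_revenue": ["etl.clean_invoices"],
    "etl.clean_invoices": ["erp.invoices", "crm.customers"],
    "erp.invoices": [],
    "crm.customers": [],
}


def trace_to_sources(asset, upstream=UPSTREAM):
    """Walk the lineage graph upstream and return the source systems,
    meaning the reachable assets that have no upstream of their own."""
    sources, stack, seen = [], [asset], set()
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # guard against cycles and repeated visits
        seen.add(node)
        parents = upstream.get(node, [])
        if not parents:
            sources.append(node)
        stack.extend(parents)
    return sorted(sources)


print(trace_to_sources("powerbi.revenue_dashboard"))
```

With such a map, an error seen in the dashboard narrows immediately to two candidate source systems and one transformation step instead of an open-ended code search.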

Treating DQ as a one-off project

Data quality naturally decays over time – a phenomenon known as data entropy. Relying on manual snapshots or annual audits leads to “stale” reports that lose relevance within days. Continuous, automated monitoring is the only way to build long-term trust; once business leaders lose faith in a dashboard due to outdated information, it is notoriously difficult to win that trust back.
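A continuous monitor can start with something as small as a freshness check that runs on a schedule. The sketch below assumes you can read a last-refresh timestamp for each dataset; the function name and the 24-hour limit are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_refreshed, max_age, now=None):
    """Return True when the dataset is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_refreshed > max_age


# Hypothetical timestamps for illustration.
last_load = datetime(2026, 1, 1, 6, 0, tzinfo=timezone.utc)
check_time = datetime(2026, 1, 3, 6, 0, tzinfo=timezone.utc)

print(is_stale(last_load, timedelta(hours=24), now=check_time))
```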

“The biggest mistake companies make is treating a Data Quality Report as a one-off IT task. From our experience transforming Collibra implementations for energy giants, we’ve seen that success only happens when a Technical Product Owner bridges the gap between raw data anomalies and business logic. Without that translation, a report is just noise.” ~ XXX, Job Title at Murdio

How to implement a data quality reporting process in 3 steps

A tool alone will not fix your data. Based on Murdio’s enterprise implementation experience, we recommend a three-step operational process:

- Define Roles and Responsibilities: A system fails without clear ownership (e.g., Data Stewards, Data Owners). As we observed during our Collibra implementation for an energy giant, assigning a dedicated Technical Product Owner accelerates governance adoption and ensures DQ rules align with business needs.

- Catalog and Track Data Lineage: You must understand where an error originates. If a dashboard shows a data anomaly, lineage allows you to trace it back to the exact source system to fix it at the root.

- Build a Data Marketplace: The ultimate goal of data quality is usability. Exposing clean, trusted data in a user-friendly storefront encourages business consumption, a strategy we successfully deployed in our Collibra Data Marketplace case study.

Murdio’s practical experience (expert perspective)

As a technical implementation team working with global leaders, we see repeating patterns. Scaling data quality across international environments requires flexible scripting and architectural optimization.

Case Study: Global Retail Operations

Implementing automated data measurement for international retail chains requires an agile technical team. During our work as the technical implementation team for a DACH retailer and an international retail chain, we proved that agile deployment of DQ rules drastically reduces time-to-value.

Another critical factor is cost. Monitoring thousands of attributes requires smart governance tool management. We actively help clients optimize Collibra licenses, ensuring they scale their Data Quality initiatives without overspending.

Conclusion

A reliable Data Quality Report (DQR) is an enterprise tool that reveals the direct impact of data health on business operations. Successfully implementing this process requires defining Critical Data Elements, mapping automated data lineage from complex sources like SAP or Snowflake, and establishing roles like a Technical Product Owner.

Are you planning to optimize your data quality or need a technical team for an advanced Collibra implementation? Contact Murdio’s experts to discuss your use case and review our full portfolio of enterprise solutions.

FAQ: data quality reports and Collibra

Measuring the quality of unstructured data is a growing challenge. The first step is cataloging, tagging, and assigning business ownership so that metrics can be defined. Our experience in cataloging unstructured data shows that this groundwork is foundational for unstructured data quality.

Data Engineers handle the architecture and extraction, but Data Stewards – assigned to specific business domains – are responsible for defining the business rules (e.g., “what constitutes a valid customer”) and fixing the reported errors.