Yes, provided you integrate classification layers that identify and redact PII/PHI during the enrichment phase. Modern pipelines use “Layout-Aware” parsing to ensure sensitive data is not lost or miscategorized in complex tables.

An unstructured data pipeline is an automated framework used to ingest, extract, and transform unformatted information – like PDFs, images, and audio – into searchable, structured formats. By 2026, over 175 zettabytes of data will exist globally, with 90% residing in unstructured formats. Mastering the unstructured data pipeline allows organizations to unlock this “dark data” to fuel strategic AI decision-making.

Key takeaways

- Implementing unstructured data cataloging early ensures that every document entering the AI pipeline has clear lineage and defined ownership.

- Automated unstructured data classification identifies and redacts sensitive PII/PHI during the processing phase, ensuring compliance-by-design for regulated industries.

- A governed pipeline enables “Factual Traceability,” allowing organizations to audit the exact source material used by an LLM to generate a specific response.

- Transitioning from experimental RAG to mission-critical applications requires “Fail-Fast” checkpoints to catch data corruption before it reaches the enterprise knowledge base.

Why is managing “dark data” the next big engineering challenge?

The global data ecosystem is navigating a period of exponential expansion, making traditional storage methods increasingly obsolete. According to IDC, the challenge lies in the sheer volume and variety of newly created information:

- Massive Scale: Approximately 80% to 90% of all data generated is unstructured.

- Diverse Formats: This includes everything from legal contracts and emails to sensor logs and video footage.

- Untapped Potential: Historically, businesses have treated these assets as a storage burden rather than a resource.

However, properly managed unstructured data offers immense benefits, such as surfacing deep consumer insights and automating high-stakes compliance audits.

At Murdio, we believe the transition from “experimental” AI to a production-ready knowledge assistant requires moving beyond treating documents as mere strings. Instead, they must be viewed as structured semantic assets. By building a robust pipeline, you shift your data strategy from passive storage to active intelligence, ensuring that your organization maintains digital sovereignty in an increasingly AI-driven market.

What is an unstructured data pipeline?

An unstructured data pipeline is a specialized engineering framework designed to ingest, process, and store information that lacks a predefined format, such as legal documents, audio recordings, and images. Unlike traditional data warehouses that handle neat, relational tables, these pipelines use advanced extraction techniques like OCR (Optical Character Recognition) and NLP (Natural Language Processing) to derive meaning from chaotic files.

The ultimate goal of unstructured data processing is to convert raw, “noisy” assets into high-fidelity structured formats – typically JSON or Markdown – that can be indexed by vector databases. This structural conversion is essential for modern AI applications, as it ensures that the “DNA” of the original content (like document geometry, table boundaries, and headers) is preserved for downstream analysis.
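To make "structure-preserving output" concrete, here is a minimal sketch. It assumes a parser has already produced layout-labelled elements. The `Element` class and its `kind` labels are hypothetical and are not the output schema of any particular tool. The sketch shows how headers and table boundaries can be carried into both Markdown and JSON instead of being flattened into plain text.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Element:
    """One parsed unit of a document, tagged with its layout role."""
    kind: str                 # hypothetical labels: "heading", "paragraph", "table"
    text: str = ""
    page: int = 1
    rows: list = field(default_factory=list)  # table cells, row by row


def to_markdown(elements: list[Element]) -> str:
    """Render elements as Markdown so headers and table boundaries survive."""
    lines = []
    for el in elements:
        if el.kind == "heading":
            lines.append(f"## {el.text}")
        elif el.kind == "table" and el.rows:
            header, *body = el.rows
            lines.append("| " + " | ".join(header) + " |")
            lines.append("|" + " --- |" * len(header))
            for row in body:
                lines.append("| " + " | ".join(row) + " |")
        else:
            lines.append(el.text)
        lines.append("")  # blank line between blocks
    return "\n".join(lines).strip()


def to_json(elements: list[Element]) -> str:
    """Serialize elements as JSON records, ready for indexing."""
    return json.dumps([asdict(el) for el in elements], indent=2)


doc = [
    Element("heading", "Quarterly Revenue"),
    Element("table", rows=[["Region", "Q1"], ["EMEA", "4.2"]]),
]
print(to_markdown(doc))
```

Because each table row stays a row and each header stays a header, a downstream chunker can keep a table together with its heading rather than scattering the cell values.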

Why should you build an unstructured data pipeline for RAG?

Retrieval-Augmented Generation (RAG) relies entirely on the quality and relevance of the context provided to the Large Language Model (LLM). You should build an unstructured data pipeline to address these three critical factors:

- Context as a Bridge: RAG acts as a link between an LLM’s general knowledge and your organization’s specific, private data.

- Reducing Hallucinations: Without a reliable pipeline, the AI is forced to work with fragmented or irrelevant snippets, significantly increasing the risk of “hallucinations” – where the AI generates confident but factually incorrect answers.

- Operational Efficiency: A production-ready RAG system must enrich each data chunk with metadata. By optimizing this upstream preparation, you ensure the system retrieves precise sources, drastically reducing token usage and LLM inference costs.

How do you identify the right use case for your data?

To identify a successful use case, organizations must transition from an “extract everything” mindset to an outcome-focused strategy. Start by targeting high-impact areas where internal knowledge is currently trapped in silos. The most effective use cases typically involve high volumes of documents that require consistent, repeatable analysis:

- Legal and Compliance: Automating the extraction of specific clauses from thousands of PDF contracts to mitigate operational risk.

- Customer Support: Building a production-ready knowledge assistant that references technical product manuals to provide instant, accurate troubleshooting.

- HR and Operations: Streamlining the processing of resumes, employee records, and policy updates to ensure consistent data availability.

Real-World Insight: Banking and Finance

In a high-stakes environment like banking, manual discovery is often a bottleneck. A leading European bank faced the challenge of managing sensitive PII (Personally Identifiable Information) hidden across thousands of legal files and emails. By leveraging a managed governance approach, they were able to automate the entire workflow.

For a detailed look at how this was achieved, see our Case Study: Cataloging Unstructured Data for a European Bank. By synchronizing technical metadata and automating discovery, the bank transformed these “dark” documents into governed, searchable, and trusted assets, significantly reducing their compliance risk.

What are the main steps in unstructured data processing?

Modern unstructured data processing follows a disciplined, multi-stage workflow. To build a system that remains reliable at scale, you must follow these four sequential steps:

- Ingestion and Discovery: Before processing begins, you must identify your assets through unstructured data discovery. This involves cataloging sources (like S3 buckets or local drives) and capturing metadata like source IDs and checksums to prevent data duplication.

- Layout-Aware Parsing: This is the most critical stage. Instead of simply stripping text, you must use “layout-aware” extraction to preserve document geometry. This ensures that tables, headers, and lists remain semantically connected rather than becoming a “word salad.”

- Semantic Chunking: Once parsed, text is divided into “chunks.” Effective chunking respects document boundaries (like paragraphs or sections) to ensure each unit remains a coherent, independent thought for the AI to digest.

- Contextual Enrichment: Chunks are enriched with metadata – often called the “DNA” of the knowledge base. This includes adding summaries or tags that describe the chunk’s relationship to the overall document, making it easier for search engines to find.
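The ingestion, chunking, and enrichment steps above can be sketched in a few functions. This is a simplified illustration under stated assumptions. The source is taken to be plain UTF-8 text, so layout-aware parsing is assumed to have already happened; for PDFs you would checksum the file bytes and chunk the parser's output. The `max_chars` limit, the `context` field, and the `s3://` paths are hypothetical. The "context" string is a simple positional stand-in for the LLM-written summaries many pipelines use.

```python
import hashlib


def checksum(data: bytes) -> str:
    """SHA-256 fingerprint used to detect documents that were already ingested."""
    return hashlib.sha256(data).hexdigest()


def chunk_by_paragraph(text: str, max_chars: int = 500) -> list[str]:
    """Pack whole paragraphs into chunks; a paragraph is never split mid-way."""
    chunks, current = [], ""
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        candidate = f"{current}\n\n{para}" if current else para
        # An oversized single paragraph still becomes its own chunk.
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            chunks.append(current)
            current = para
    if current:
        chunks.append(current)
    return chunks


def ingest(source_id: str, title: str, raw: bytes, seen: set[str],
           max_chars: int = 500) -> list[dict]:
    """Deduplicate, chunk, and enrich one plain-text document."""
    digest = checksum(raw)
    if digest in seen:  # identical content already indexed: skip it
        return []
    seen.add(digest)
    chunks = chunk_by_paragraph(raw.decode("utf-8"), max_chars)
    return [
        {
            "text": chunk,
            "source_id": source_id,  # lineage back to the original file
            "checksum": digest,
            "chunk_index": i,
            # Simple positional context; an LLM-written summary could go here instead.
            "context": f"Part {i + 1} of {len(chunks)} from '{title}'",
        }
        for i, chunk in enumerate(chunks)
    ]


seen: set[str] = set()
contract = b"Scope of services.\n\nPayment terms: net 30.\n\nTermination clause."
first = ingest("s3://contracts/msa.txt", "Master Agreement", contract, seen, max_chars=40)
duplicate = ingest("s3://archive/msa-copy.txt", "Master Agreement", contract, seen, max_chars=40)
print(len(first), len(duplicate))  # 3 chunks, then 0 for the duplicate
```

Deduplicating on content checksums rather than file names means a copied or renamed file is caught even when its path differs.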

How do you choose the right parsing tools for your budget?

Choosing the right tool for your unstructured data pipeline requires balancing structural fidelity with processing speed. In 2026, the market is defined by four industry leaders, each optimized for different enterprise needs.

| Tool | Processing Speed | Structural Fidelity | Best Use Case |
| --- | --- | --- | --- |
| Docling (IBM) | Moderate (linear scaling) | Excellent (97.9% table accuracy) | Enterprise sustainability and complex financial reporting |
| LlamaParse | High (approx. 6 s/document) | Human-level precision; agentic OCR | Production-ready AI agents and high-throughput RAG |
| Unstructured.io | Low (up to 141 s per 50 pages) | Strong OCR; inconsistent on complex tables | Open-source builders seeking a reliable ingestion layer |
| Amazon Textract | High (asynchronous model) | Strong form and relationship mapping | AWS-native ecosystems and high-throughput invoice processing |

How do you optimize for cost and performance?

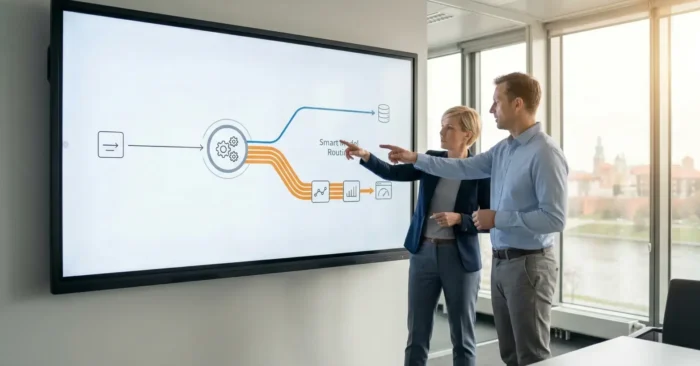

Optimizing your pipeline for 2026 involves a shift toward "FinOps" strategies for AI. Because RAG costs are interconnected (chunking choices drive both the number of embeddings you store and the size of every prompt), optimization has to span the whole pipeline. One technique many engineering teams deploy is Smart Model Routing.

This technique routes routine queries to simple, fast models (like GPT-4o mini) while reserving complex reasoning for high-tier models (like GPT-4 Turbo). By combining routing with embedding quantization (int8/float8), which shrinks the memory footprint of the vector index, enterprises can reduce LLM inference costs by 60% to 80% without sacrificing performance on intricate tasks.
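Both ideas can be illustrated in a short sketch. The router below is a hypothetical keyword-and-length heuristic; production routers often use a trained classifier instead. The model identifiers simply mirror the examples above. The quantizer uses symmetric per-vector int8 scaling, one common scheme among several.

```python
def route_model(query: str, word_limit: int = 30) -> str:
    """Toy router: cheap model for short routine queries, strong model otherwise."""
    reasoning_cues = ("why", "compare", "analyze", "explain", "trade-off")
    needs_reasoning = any(cue in query.lower() for cue in reasoning_cues)
    if needs_reasoning or len(query.split()) > word_limit:
        return "gpt-4-turbo"  # high-tier model for complex reasoning
    return "gpt-4o-mini"      # fast, low-cost model for routine lookups


def quantize_int8(vector: list[float]) -> tuple[list[int], float]:
    """Symmetric int8 quantization: stored as int8, 1 byte per dimension vs 4 for float32."""
    scale = max(abs(x) for x in vector) / 127 or 1.0  # all-zero vector: any scale works
    return [round(x / scale) for x in vector], scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Approximate reconstruction; the error per dimension is at most scale / 2."""
    return [c * scale for c in codes]


print(route_model("What is our refund policy?"))                          # gpt-4o-mini
print(route_model("Compare the termination clauses in both contracts"))   # gpt-4-turbo
codes, scale = quantize_int8([0.5, -0.25, 1.0])
print(dequantize(codes, scale))
```

Quantization trades a small, bounded loss of precision for a roughly fourfold reduction in vector storage. It is therefore usually validated against retrieval quality before rollout.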

Why is governance critical for your pipeline?

Governance is the continuous layer that keeps your data secure, high-quality, and compliant. Without it, pipelines suffer from "silent corruption": corrupted records or unredacted sensitive information propagate downstream without warning. A robust governance strategy relies on three pillars:

- Classification: You must implement unstructured data classification early to tag sensitive entities (PII/PHI) and apply redaction where necessary.

- Cataloging: Building an unstructured data cataloging system creates a reliable “Source of Truth,” allowing teams to trace the lineage of every AI-generated response.

- Validation: Automated “Fail-Fast” checkpoints verify that every extracted chunk meets business logic constraints before entering the vector store.
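A classification-and-validation checkpoint might look like the sketch below. The regex patterns are purely illustrative. Production PII/PHI classifiers typically rely on NER models and many more entity types. The business rules shown (a required `source_id`, non-empty text, a size limit) are hypothetical examples of "business logic constraints".

```python
import re

# Illustrative patterns only; production classifiers typically combine NER models,
# checksum validation (e.g. for IBANs), and many more entity types.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "IBAN": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
    "PHONE": re.compile(r"\+\d[\d\s-]{7,}\d"),  # international format only
}


class ValidationError(ValueError):
    """Raised to stop a bad chunk before it reaches the vector store."""


def redact(text: str) -> tuple[str, list[str]]:
    """Replace detected PII with typed placeholders and report what was found."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        found.extend([label] * count)
    return text, found


def checkpoint(chunk: dict, max_chars: int = 2000) -> dict:
    """Fail fast on rule violations, then redact PII before indexing."""
    if not chunk.get("source_id"):
        raise ValidationError("missing source_id: lineage cannot be traced")
    text = chunk.get("text", "").strip()
    if not text:
        raise ValidationError(f"empty chunk from {chunk['source_id']}")
    if len(text) > max_chars:
        raise ValidationError(f"chunk from {chunk['source_id']} exceeds {max_chars} chars")
    clean, found = redact(text)
    return {**chunk, "text": clean, "pii_types": sorted(set(found))}


safe = checkpoint({"source_id": "mail-042",
                   "text": "Contact jan@bank.eu or +48 601 234 567, IBAN DE89370400440532013000 today"})
print(safe["text"])
print(safe["pii_types"])  # ['EMAIL', 'IBAN', 'PHONE']
```

Raising an exception, rather than logging and continuing, is what makes the checkpoint "fail-fast". The offending chunk never reaches the vector store, and the error message names its source for follow-up.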

Master your data landscape with Murdio

A powerful unstructured data pipeline is only as valuable as the governance framework protecting it. At Murdio, we help enterprises bridge the gap between raw documents and actionable intelligence.

As a specialized firm implementing Collibra data governance solutions, we provide dedicated implementation teams and custom Collibra development services. Whether you are building a production-ready knowledge assistant or securing a legacy knowledge base, our experts ensure your unstructured data is governed, cataloged, and audit-ready for the AI era.

Book a consultation to discuss your implementation journey.

Frequently Asked Questions (FAQ)

For commercial use cases, metadata should be refreshed every 30 to 60 days to maintain “freshness.” AI search models prioritize content that displays recent “Last Updated” signals in its code and metadata.
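A refresh policy like this is easy to automate. The sketch below flags catalog records whose metadata is older than the upper end of the 30-to-60-day window. The record shape (`id`, `last_updated`) is a hypothetical example, not a specific catalog's schema.

```python
from datetime import date, timedelta


def stale_records(records: list[dict], today: date, max_age_days: int = 60) -> list[str]:
    """Return IDs whose metadata has not been refreshed within the window."""
    cutoff = today - timedelta(days=max_age_days)
    return [r["id"] for r in records if r["last_updated"] < cutoff]


catalog = [
    {"id": "policy-7", "last_updated": date(2026, 1, 5)},
    {"id": "manual-2", "last_updated": date(2026, 3, 1)},
]
print(stale_records(catalog, today=date(2026, 3, 20)))  # ['policy-7']
```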

Yes, multimodal pipelines use specialized encoders to tokenize waveforms and pixel data, aligning them with textual context for unified retrieval.

Yes. The modern approach to ETL (Extract, Transform, Load) for unstructured data has evolved from custom coding to "agentic" extraction. With automated tools, you can transform a 100-page PDF into a clean, structured JSON file that maps every table cell and section header with human-level precision. This process is the foundation for converting unstructured data into structured data, allowing you to move from "reading files" to "querying facts" within a governed data warehouse.
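The "querying facts" step can be illustrated with Python's built-in `sqlite3` module, which stands in for a data warehouse here. The JSON records are hypothetical extraction output, one record per table row, and the table and column names are illustrative.

```python
import json
import sqlite3

# Hypothetical output of an extraction step: one record per table row.
extracted = json.loads("""
[
  {"section": "4. Fees", "item": "Setup fee", "amount_eur": 1500},
  {"section": "4. Fees", "item": "Monthly licence", "amount_eur": 320},
  {"section": "7. Penalties", "item": "Late delivery", "amount_eur": 200}
]
""")

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE contract_terms (section TEXT, item TEXT, amount_eur REAL)")
# Named placeholders map each JSON key onto a column.
conn.executemany(
    "INSERT INTO contract_terms VALUES (:section, :item, :amount_eur)", extracted
)

# Query the facts directly instead of re-reading the PDF.
total_fees = conn.execute(
    "SELECT SUM(amount_eur) FROM contract_terms WHERE section = ?", ("4. Fees",)
).fetchone()[0]
print(total_fees)  # 1820.0
```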

Most pipelines are designed to handle diverse data sources, from plain text files, PDFs, and DOC files to raw HTML scrapes and multimodal inputs. Effective data ingestion layers standardize these chaotic inputs into a uniform format, preparing them for transformation into numerical representations (embeddings).
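As a minimal sketch of that standardization, the function below maps HTML and plain text onto one record shape. It uses only the standard library's `html.parser`. Formats such as PDF, DOC, images, and audio need a dedicated parser or OCR step, which is deliberately left out. The record fields are a hypothetical uniform schema.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible text from HTML, skipping script and style blocks."""

    def __init__(self):
        super().__init__()
        self.parts, self._skip = [], 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.parts.append(data.strip())


def normalize(source_id: str, content: str, fmt: str) -> dict:
    """Map different input formats onto one uniform record shape."""
    if fmt == "html":
        parser = _TextExtractor()
        parser.feed(content)
        parser.close()
        text = "\n".join(parser.parts)
    elif fmt == "txt":
        text = content.strip()
    else:
        # PDFs, DOC files, images, and audio need a dedicated parser or OCR step.
        raise NotImplementedError(f"no stdlib handler for {fmt!r}")
    return {"source_id": source_id, "format": fmt, "text": text}


page = "<html><head><style>p{color:red}</style></head><body><h1>Returns</h1><p>Within 30 days.</p></body></html>"
print(normalize("web-1", page, "html"))
```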

The architecture of a modern RAG application centers on enhancing generative AI with private, internal context. Using built-in connectors to large language models, such as those from OpenAI, the system retrieves relevant chunks of information and passes them to the model through an API for synthesis. This keeps the output both fluent and factually anchored in your specific, governed datasets.
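The hand-off between retrieval and generation can be sketched as prompt assembly. The chunk fields (`source_id`, `text`, `score`) and the prompt wording are hypothetical. The call that sends the prompt to a model provider's API is deliberately omitted, because it depends on the SDK you use. Keeping source IDs inside the prompt is what makes each answer traceable back to its documents.

```python
def build_prompt(question: str, chunks: list[dict], max_chunks: int = 3) -> str:
    """Assemble a grounded prompt; each passage keeps its source ID for citation."""
    top = sorted(chunks, key=lambda c: c["score"], reverse=True)[:max_chunks]
    context = "\n\n".join(f"[{c['source_id']}] {c['text']}" for c in top)
    return (
        "Answer using only the sources below and cite source IDs in brackets. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


retrieved = [
    {"source_id": "policy-7#2", "text": "Refunds are issued within 14 days.", "score": 0.91},
    {"source_id": "faq-3#1", "text": "Our office opens at 9:00.", "score": 0.12},
]
prompt = build_prompt("How fast are refunds issued?", retrieved, max_chunks=1)
print(prompt)
```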

Embeddings, or more specifically vector embeddings, are the mathematical foundation of semantic search. When you process a document, the pipeline converts each chunk of text into a high-dimensional vector, typically via an API call to an embedding model. These embeddings let the system retrieve information by semantic similarity rather than simple keyword matching: the "distance" between two vectors indicates how closely related the underlying pieces of information are.
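Cosine similarity is one common way to measure that closeness. The sketch below ranks stored chunks against a query vector. The three-dimensional vectors are toy values chosen by hand. Real embedding models produce hundreds or thousands of dimensions, and vector databases use approximate indexes rather than this brute-force scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 = same direction, 0.0 = orthogonal (unrelated)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Rank stored chunks by similarity to the query vector (brute force)."""
    scored = [(cid, cosine(query_vec, vec)) for cid, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy 3-dimensional vectors standing in for real embeddings.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.2, 0.8, 0.1],
    "office-hours": [0.0, 0.1, 0.9],
}
query = [0.8, 0.2, 0.0]  # imagine the embedding of "Can I get my money back?"
print(top_k(query, index, k=1))
```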

To ensure scalability, organizations must move beyond manual scripts and utilize automated orchestration for data ingestion. By choosing tools that standardize raw inputs into structured elements, you can process millions of documents without performance degradation. This allows your AI systems to remain responsive and accurate even as your enterprise knowledge base grows exponentially.
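A minimal sketch of batched, parallel ingestion with the standard library follows. `process_document` is a hypothetical placeholder for the parse, chunk, and enrich steps. Threads suit I/O-bound work such as calls to parsing or embedding APIs, while CPU-bound local parsing would usually use `ProcessPoolExecutor` instead. A workflow orchestrator would add scheduling, retries, and monitoring on top of this pattern.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import islice


def batched(items, size):
    """Yield successive fixed-size batches (itertools.batched needs Python 3.12+)."""
    it = iter(items)
    while batch := list(islice(it, size)):
        yield batch


def process_document(doc_id: str) -> dict:
    """Hypothetical placeholder for parse -> chunk -> enrich."""
    return {"doc_id": doc_id, "status": "ok"}


def run_pipeline(doc_ids, batch_size: int = 100, workers: int = 8) -> list[dict]:
    """Process documents batch by batch with a shared worker pool."""
    results = []
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for batch in batched(doc_ids, batch_size):
            # map() returns results in input order, which keeps runs easy to audit.
            results.extend(pool.map(process_document, batch))
    return results


results = run_pipeline([f"doc-{i}" for i in range(250)], batch_size=100, workers=4)
print(len(results))
```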